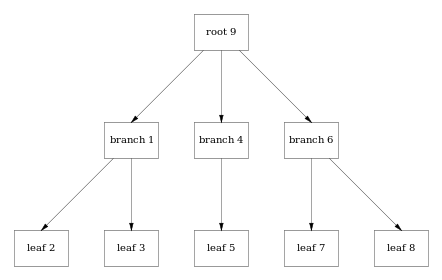

Consider this 3-level b+tree. There are two levels of branch pages (the root is a branch page), and 5 leaf pages. Data and keys are stored in the leaf pages.

Leaf chaining, ie links between leaf pages for easy sequential access, is not supported as it would require the whole tree to be rewritten on each update.

The pages are stored sequentially in the database file. Increasing page numbers means increasing file offsets. The meta page includes a pointer to the root page, a SHA1 hash, and statistics counters.

When the file is opened it is scanned backwards page by page to find a valid meta page, and thus the root of the tree.

When updating a value in leaf page 8, instead of changing the page in-place, a whole new page is appended to the file (here as page 12). Because the location of the page is changed, each parent page needs to be updated to point to the new locations.

Leaf page 7 is not affected. A new root page is created as a copy of the previous root page, only the pointer to branch page 6 is updated to point to branch page 11.

Any cursor still having a pointer to root page 9 can traverse the tree unaffected by the change. It will see a consistent snapshot of the database. Dashed pages and pointers in the diagram are still there in the file, they are just not the last version.

In the file, pages are written sequentially by appending new pages to the file. Already written pages are never modified.

After each new generation of the tree is written, there is a meta page pointing to the new root.

Changing one page (leaf page 8) resulted in 4 new pages being appended to the file. This wastes disk space, but writing consecutive pages to disk is more efficient than writing random locations. And there is no need for a transaction log, because the database file is the transaction log.